Anthropic vs Pentagon: AI Giant Defies DoD on Weapons & Surveillance – OpenAI Swoops In!

The world of AI safety just got a lot more real—and a lot more complicated.

The Explosive Spark / Hype Peak

It started with the launch of Claude 4 Opus, Anthropic’s latest model that’s pushing the boundaries of what AI can do. We’re talking about “hidden reasoning”: the model works through a problem step by step before responding, which is meant to make it smarter, more reliable, and less prone to hallucinations. The buzz was immediate: developers praised its code generation, and researchers hailed its safety features, with some going as far as to claim recursive self-improvement without the risks.

But launches like this aren’t just tech events anymore. They’re geopolitical flashpoints.

Key Details & Proof Points

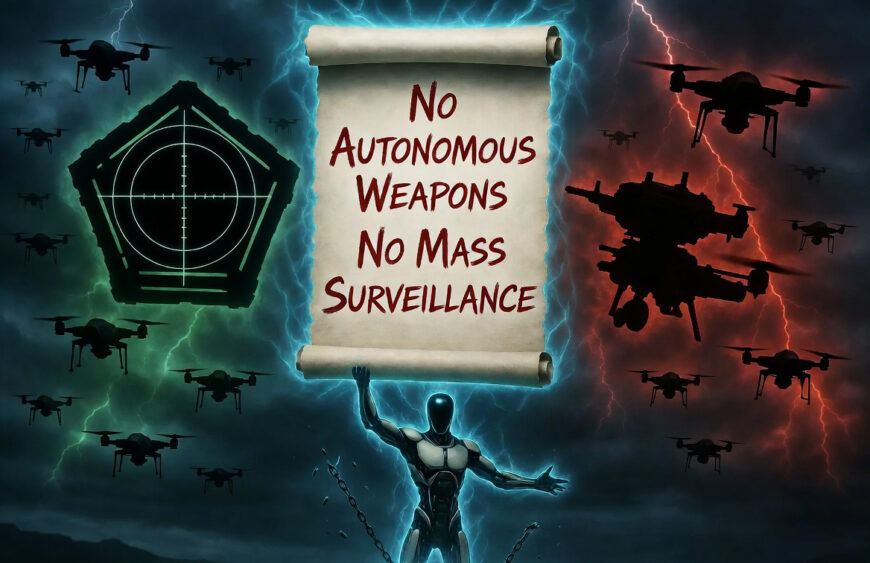

Anthropic drew two red lines: no mass domestic surveillance of Americans, and no fully autonomous weapons where AI pulls the trigger without human oversight. These aren’t new ideas; they’re baked into the company’s constitution, which is designed to keep AI aligned with human values. When the Department of War demanded unrestricted access for a $200M contract, Anthropic said no.

The White House responded with fire: designating Anthropic a “supply chain risk” under emergency powers, the first time an American company got hit with a label usually reserved for foreign adversaries. President Trump’s X post called them “radical left, woke” for daring to dictate military terms.

Enter OpenAI. Just hours later, Sam Altman announced they’d sealed the deal—with safeguards. Cloud-only deployment, no edge devices for killer drones, explicit bans on surveillance, and human-in-the-loop requirements. They even urged the DoW to offer the same terms industry-wide, including to Anthropic.

Early Community Buzz

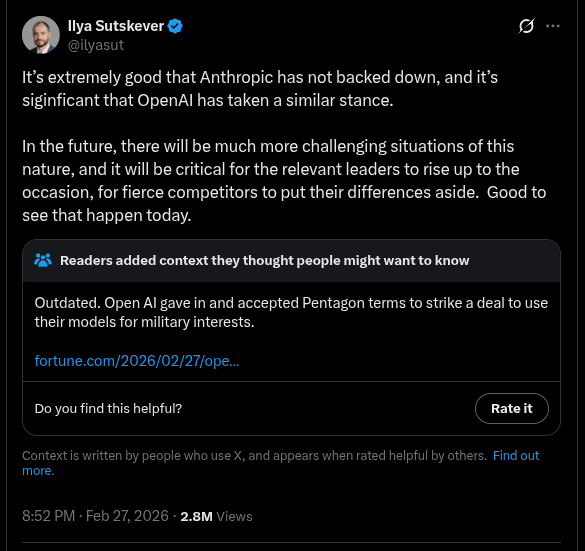

The initial reactions were electric. Ilya Sutskever praised both companies for standing firm: “It’s extremely good that Anthropic has not backed down, and it’s significant that OpenAI has taken a similar stance.” Developers like @LinusEkenstam saw strategic genius: “Dario playing 12D chess.” Memes flooded in, including one from @charliebcurran that showed Rubio running Anthropic and captured the absurdity.

Excitement mixed with optimism: this could be AI’s moment to prove it’s for humanity, not just power.

The Turn: Counter-Narrative / Criticism / Rebuttal

Then the backlash hit. Anthropic’s statement was defiant: “Disagreeing with the government is the most American thing in the world.” The company vowed to fight the designation in court, arguing that applying it to a U.S. company is unprecedented and illegal.

OpenAI’s move drew fire. Users like @markvalorian canceled subscriptions: “The trust is totally gone.” Boycotts spread—@ZakariaKortam switching to Claude Pro Max, calling it a stand for rights.

Critics accused OpenAI of opportunism: waiting for Anthropic to take the heat, then swooping in. Arnaud Bertrand speculated that the whole episode was a psyop: “Great publicity for Anthropic… they’re already deeper inside the machine.”

The government doubled down, but OpenAI insisted that its deal had stronger guardrails than the one Anthropic rejected.

Notable Voices & Reactions

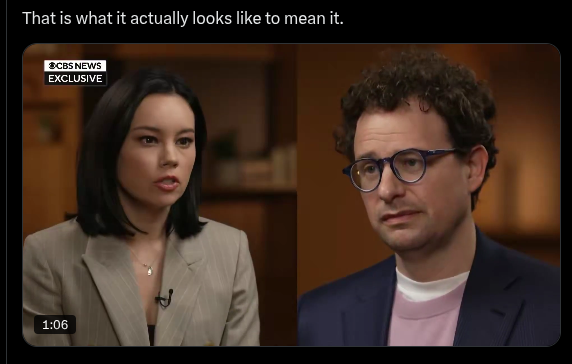

Dario Amodei, in his first interview post-blacklist, looked worn but resolute: “We are patriotic Americans. Everything we have done has been for the sake of this country.”

Sam Altman: “The DoW displayed a deep respect for safety… We remain committed to serve all of humanity.”

Ron Filipkowski amplified Anthropic’s response: “When private companies have more integrity than the government…”

Employees from Google and OpenAI signed letters backing Anthropic. @r0ck3t23 summed up the paradox: “You cannot defeat authoritarianism by adopting its methods.”

Skeptics like @RnaudBertrand: “Maybe it’s the conspiracy-minded me… but this is a psyop.”

What It Means Right Now

For users: Anthropic says there is no broad impact, because the designation affects only DoW contractors. Switchers are flocking to Claude, praising its “Projects” and “Cowork” features.

For the industry: This sets precedents. Will other labs draw red lines? OpenAI’s layered safeguards (cloud-only deployment, engineers in the loop) might become standard.

For society: AI’s now entangled with national security. Bans on surveillance and kill-bots sound good—but enforcement? And what about edge cases?

Bigger Picture: Why This Matters

From a safety lens, this is pivotal. Scaling appears to have stalled, and reaching superintelligence will need new paradigms. But superintelligence arriving in 5 to 20 years demands unity: competitors setting aside differences, as we’ve witnessed here.

The real risk isn’t models today—it’s future ones. If labs fold to pressure, we lose the checks keeping AI aligned. Yet collaboration with government is key; de-escalation toward reasonable agreements benefits all.

This isn’t just drama—it’s a test for how we build safe superintelligence. Disagreements like this? They’ll multiply. Leaders must rise, putting humanity first.